Scrapy in Practice: A Crawler for Sina.com's Categorized News

Date: 2019-05-16 15:06:08


Project requirements:

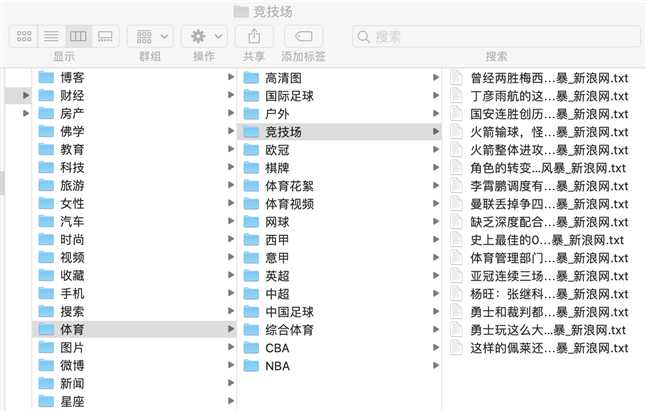

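Crawl every top-level category and subcategory on the Sina news navigation page, the sub-links inside each subcategory, and the news content of every sub-link page.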

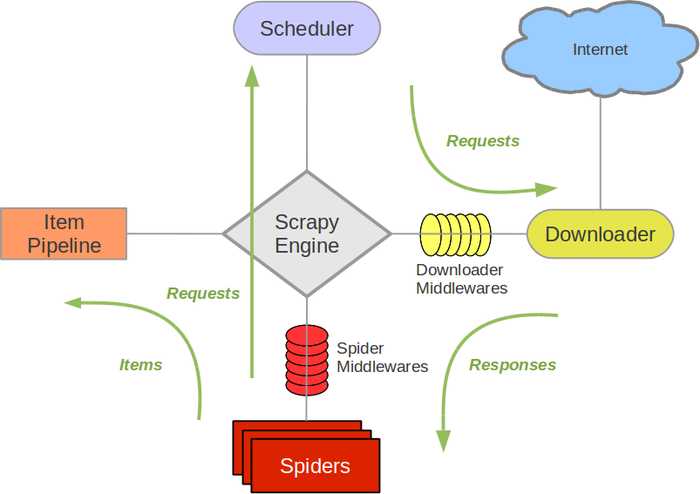

What is Scrapy?

Scrapy is an application framework written in pure Python for crawling websites and extracting structured data. It has a very wide range of uses.

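Because the framework does the heavy lifting, you only need to customize a few modules to build a crawler that fetches page content and images, which makes it very convenient.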

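Scrapy uses the Twisted ['twɪstɪd] asynchronous networking framework (its main rival is Tornado) to handle network communication. This speeds up downloads without you having to implement an asynchronous framework yourself, and Scrapy provides a range of middleware hooks for handling all kinds of requirements flexibly.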
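As an illustration of those middleware hooks, here is a minimal sketch of a downloader middleware that sets a User-Agent on every outgoing request. The class name `RandomUserAgentMiddleware`, the `USER_AGENTS` list and the `sina.middlewares` settings path are hypothetical; the part Scrapy defines is the `process_request(request, spider)` hook, where returning `None` lets the request continue through the middleware chain.

```python
import random

# Hypothetical pool of User-Agent strings; replace with real browser UAs.
USER_AGENTS = [
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_4) AppleWebKit/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36",
]


class RandomUserAgentMiddleware(object):
    """Downloader middleware sketch: pick a User-Agent for each request."""

    def process_request(self, request, spider):
        # Overwrite the header before the request is downloaded
        request.headers['User-Agent'] = random.choice(USER_AGENTS)
        # Returning None tells Scrapy to keep processing the request normally
        return None


# Hypothetical settings.py entry that enables the middleware:
# DOWNLOADER_MIDDLEWARES = {'sina.middlewares.RandomUserAgentMiddleware': 543}
```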

Scrapy architecture diagram

Building a Scrapy crawler takes four steps:

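- Create the project (scrapy startproject xxx): set up a new crawler project

- Define the target (write items.py): specify the data you want to scrape

- Write the spider (spiders/xxspider.py): build the spider and start crawling pages

- Store the content (pipelines.py): design a pipeline that stores the scraped content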

Hands-on:

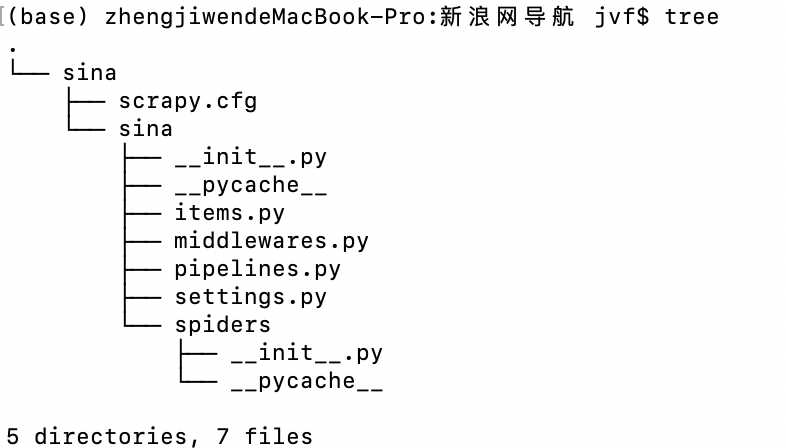

Create the Scrapy project

Open a terminal, cd into a directory of your choice, and run the following command:

scrapy startproject sina

The project is created:

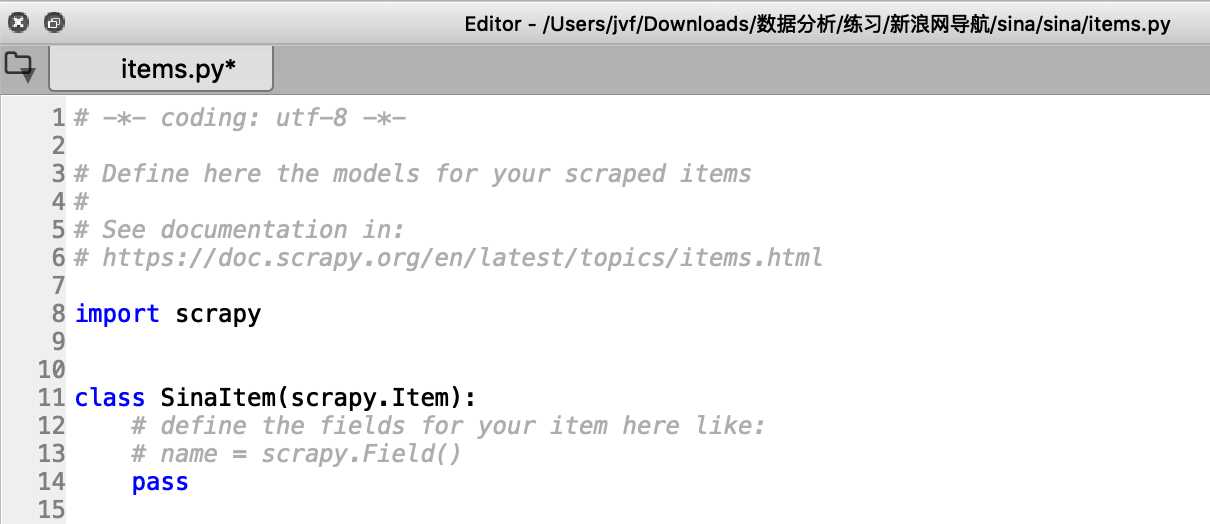

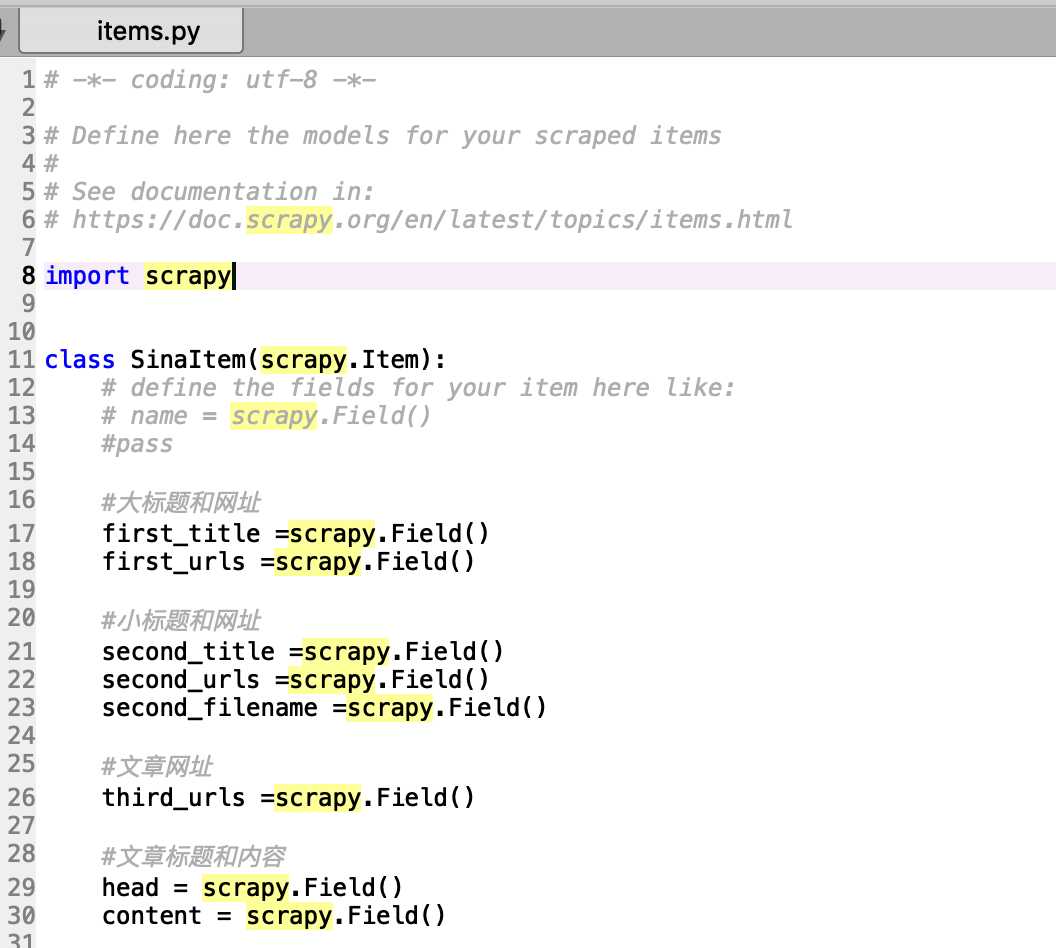

Define the target (write items.py)

Next, specify exactly what you want to scrape.

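Open items.py in the project's sina package. Scrapy has already generated a class that subclasses scrapy.Item; you define an Item by declaring class attributes of type scrapy.Field (roughly analogous to an ORM mapping).

Next, modify the generated SinaItem class to build the item model.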

Write the spider (spiders/xxspider.py)

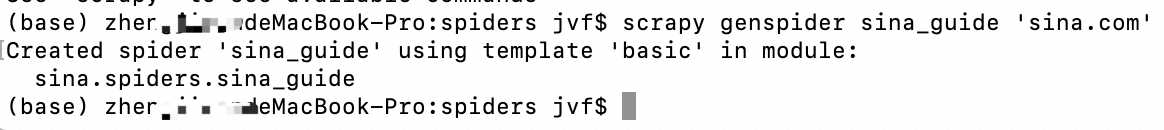

Step three: write the spider and start crawling pages.

- In the project directory, run the following command. It creates a spider named sina_guide in the sina/sina/spiders directory and restricts it to the given domain:

scrapy genspider sina_guide 'sina.com'

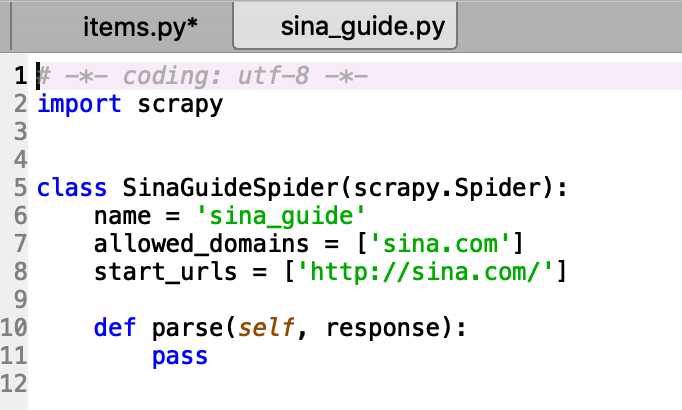

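- Open sina_guide.py. The spider class has already been generated: the spider name is 'sina_guide', allowed_domains is sina.com, and the start URL is 'http://sina.com/' (this needs changing).

- Change the start URL to http://news.sina.com.cn/guide/. This navigation page lists many top-level headings, each subdivided into many second-level headings. Also change allowed_domains to sina.com.cn, as the complete listing at the end of this post does; news.sina.com.cn is not a subdomain of sina.com, so Scrapy's offsite filtering would otherwise drop the follow-up requests.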

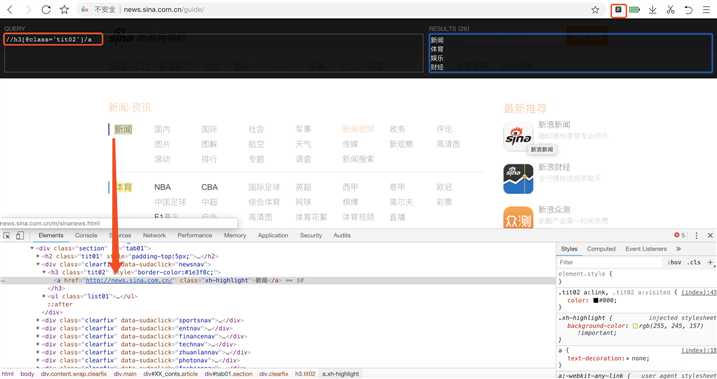

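- To scrape all category and subcategory titles, right-click the page and choose "Inspect Element" to locate the element, then derive its XPath (the XPath Helper browser extension can help locate and generate it):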

```python
def parse(self, response):
    # All top-level category titles and URLs
    first_title = response.xpath("//h3[@class='tit02']/a/text()").extract()
    first_urls = response.xpath("//h3[@class='tit02']//@href").extract()

    # All subcategory titles and URLs
    second_title = response.xpath("//ul[@class='list01']/li/a/text()").extract()
    second_urls = response.xpath("//ul[@class='list01']/li/a/@href").extract()
```

- Create a folder hierarchy named after the scraped titles.

- Create the top-level category folders:

```python
def parse(self, response):
    # All top-level category titles and URLs
    first_title = response.xpath("//h3[@class='tit02']/a/text()").extract()
    first_urls = response.xpath("//h3[@class='tit02']//@href").extract()

    # All subcategory titles and URLs
    second_title = response.xpath("//ul[@class='list01']/li/a/text()").extract()
    second_urls = response.xpath("//ul[@class='list01']/li/a/@href").extract()

    # Walk the top-level categories and set up a folder for each
    for i in range(len(first_title)):
        item = SinaItem()

        # Working directory and folder name for this category
        first_filename = "/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA" + '/' + first_title[i]

        # Create the category folder, skipping it if it already exists
        if not os.path.exists(first_filename):
            os.makedirs(first_filename)

        # Save the category title and URL
        item['first_title'] = first_title[i]
        item['first_urls'] = first_urls[i]
```
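The folder logic above concatenates raw titles into a path, so a title containing "/" would silently add an extra nesting level, and the hard-coded base directory only exists on the author's machine. A minimal sketch of a more defensive helper follows; `safe_name`, `make_category_dir` and the `base_dir` parameter are hypothetical names, not part of Scrapy:

```python
import os
import re


def safe_name(title):
    """Turn a scraped title into a single, safe path component."""
    # Replace path separators and characters Windows forbids in names
    name = re.sub(r'[\\/:*?"<>|]', '_', title).strip()
    # Fall back to a placeholder if nothing usable is left
    return name or 'untitled'


def make_category_dir(base_dir, *titles):
    """Create base_dir/title1/title2/... if missing and return the path."""
    path = os.path.join(base_dir, *(safe_name(t) for t in titles))
    # exist_ok=True replaces the explicit os.path.exists() check
    os.makedirs(path, exist_ok=True)
    return path
```

In parse(), `make_category_dir(base_dir, first_title[i])` would then replace the manual concatenation, and `make_category_dir(base_dir, first_title[i], second_title[j])` the subcategory one.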

- Create the second-level (subcategory) folders:

```python
        # ...continuing inside the `for i` loop of parse():
        # walk the subcategories and create a folder for each one
        # that belongs to the current top-level category
        for j in range(len(second_urls)):
            if second_urls[j].startswith(first_urls[i]):
                second_filename = first_filename + '/' + second_title[j]

                # Create the subcategory folder, skipping it if it already exists
                if not os.path.exists(second_filename):
                    os.makedirs(second_filename)

                # Save the subcategory title, URL and folder
                item['second_title'] = second_title[j]
                item['second_urls'] = second_urls[j]
                item['second_filename'] = second_filename

                # Debug log
                b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/111.txt"
                with open(b_filename, 'a+') as b:
                    b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')

                # Request each subcategory URL; the Response, together with the
                # meta data, is handed to the second_parse callback.
                # deepcopy is required because `item` is mutated on the next iteration.
                yield scrapy.Request(url=item['second_urls'],
                                     meta={'meta_1': copy.deepcopy(item)},
                                     callback=self.second_parse)

def second_parse(self, response):
    item = response.meta['meta_1']
    third_urls = response.xpath('//a/@href').extract()

    for i in range(len(third_urls)):
        # Keep links that start with the category URL and end in "shtml"
        if_belong = third_urls[i].startswith(item['first_urls']) and third_urls[i].endswith('shtml')
        if if_belong:
            item['third_urls'] = third_urls[i]
            yield scrapy.Request(url=item['third_urls'],
                                 meta={'meta_2': copy.deepcopy(item)},
                                 callback=self.detail_parse)

            # Debug log
            b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/222.txt"
            with open(b_filename, 'a+') as b:
                b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')
```
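The two membership rules used above are plain string tests: a subcategory belongs to a category when its URL starts with the category URL, and an article link is kept when it also ends in "shtml". Pulling them into small functions (the names `group_subcategories` and `is_article_link` are hypothetical) makes them easy to check without touching the network:

```python
def group_subcategories(first_urls, second_urls):
    """Map each category URL to the subcategory URLs that start with it."""
    groups = {}
    for first in first_urls:
        # Same rule as parse(): prefix match on the category URL
        groups[first] = [second for second in second_urls if second.startswith(first)]
    return groups


def is_article_link(url, category_url):
    """Same rule as second_parse(): inside the category and ending in 'shtml'."""
    return url.startswith(category_url) and url.endswith('shtml')
```

Note that the filter only recognizes absolute URLs; a relative href such as `/c/xxx.shtml` never matches unless it is first made absolute, for example with `response.urljoin()`.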

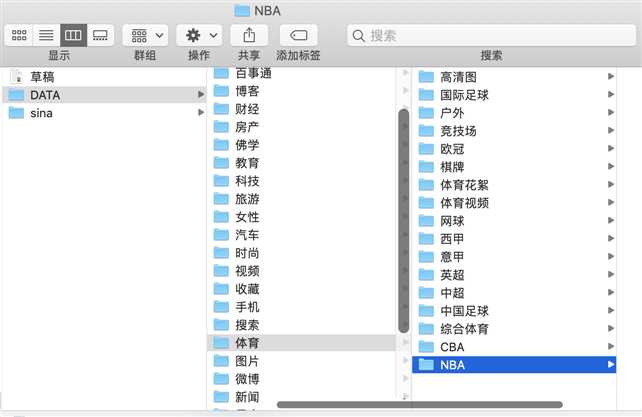

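- Once this runs, you get a set of folders organized by title.

- With the folders in place, the next step is to scrape the content and store it in the matching folder: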

```python
def detail_parse(self, response):
    item = response.meta['meta_2']

    # Article title: first match of either expression, in document order
    head = response.xpath("//li[@class='item']//a/text() | //title/text()").extract()[0]

    # Article body: join the paragraph text nodes and strip full-width spaces (U+3000)
    content_list = response.xpath('//div[@id="artibody"]/p/text()').extract()
    content = ''.join(content_list).replace('\u3000', '')

    item['head'] = head
    item['content'] = content

    yield item

    # Debug log
    b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/333.txt"
    with open(b_filename, 'a+') as b:
        b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')
```
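detail_parse glues the paragraph text nodes together and removes the ideographic space U+3000 that Chinese articles use for indentation. Here is the same step as a standalone function (the name `clean_content` is hypothetical). Unlike the original, it also trims whitespace around each paragraph, drops empty ones and, by default, keeps paragraph breaks; those are assumptions about the desired output format:

```python
def clean_content(paragraphs, keep_breaks=True):
    """Join paragraph text nodes into one article body.

    Removes every full-width space (U+3000), trims surrounding whitespace
    and drops paragraphs that end up empty.
    """
    cleaned = [p.replace('\u3000', '').strip() for p in paragraphs]
    cleaned = [p for p in cleaned if p]
    return ('\n' if keep_breaks else '').join(cleaned)
```

Calling it with `keep_breaks=False` reproduces the original single-string output, minus the stray whitespace.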

Store the content (pipelines.py)

Configure pipelines.py:

```python
class SinaPipeline(object):
    def process_item(self, item, spider):
        # Save each article as <subcategory folder>/<article title>.txt;
        # "/" in a title would be read as a directory separator, so replace it
        filename = item['second_filename'] + '/' + item['head'].replace('/', '_') + '.txt'
        with open(filename, 'w', encoding='utf-8') as f:
            f.write(item['content'])

        # Debug log
        b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/444.txt"
        with open(b_filename, 'a') as b:
            b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')

        # Pass the item on to any later pipelines
        return item
```
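A somewhat more defensive variant is sketched below, assuming the same item fields. The class name `SafeSinaPipeline` and the `_safe_name` helper are hypothetical; `process_item(self, item, spider)`, which must return the item (or raise `DropItem`), is the hook Scrapy calls for every scraped item. Besides sanitizing all characters Windows forbids, it creates the target folder if it is missing and builds the path with `os.path.join`:

```python
import os
import re


class SafeSinaPipeline(object):
    """Pipeline sketch: write each article to <second_filename>/<head>.txt."""

    @staticmethod
    def _safe_name(title):
        # Replace characters that are not allowed in file names
        name = re.sub(r'[\\/:*?"<>|\r\n]', '_', title).strip()
        return name or 'untitled'

    def process_item(self, item, spider):
        folder = item['second_filename']
        # Make sure the folder exists even if the spider did not create it
        os.makedirs(folder, exist_ok=True)
        path = os.path.join(folder, self._safe_name(item['head']) + '.txt')
        # Explicit UTF-8 so Chinese text is written the same way on every OS
        with open(path, 'w', encoding='utf-8') as f:
            f.write(item['content'])
        # Returning the item passes it on to any later pipelines
        return item
```

Since the pipeline only reads fields with `item[...]`, it works with a SinaItem as well as with a plain dict, which makes it easy to exercise outside a crawl.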

Run the spider from the terminal:

scrapy crawl sina_guide
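For the crawl to actually write files, the pipeline must be enabled: Scrapy only calls pipelines listed in the project's settings.py, and startproject generates this entry commented out. Assuming the default layout created by `scrapy startproject sina`, the entry looks like this; the value is the pipeline's order, an integer from 0 to 1000 where lower values run first:

```python
# sina/settings.py
ITEM_PIPELINES = {
    'sina.pipelines.SinaPipeline': 300,
}
```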

Done.

The complete sina_guide.py, for reference:

```python
# -*- coding: utf-8 -*-

# NOTE: mind the deep-copy issue with the meta argument of scrapy.Request!
# https://blog.csdn.net/qq_41020281/article/details/83115617
import copy
import os
import sys

# Makes the `sina` package importable when this file is run outside the project root
sys.path.append('/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/sina')

import scrapy
from sina.items import SinaItem


class SinaGuideSpider(scrapy.Spider):
    name = 'sina_guide'
    allowed_domains = ['sina.com.cn']
    start_urls = ['http://news.sina.com.cn/guide/']

    def parse(self, response):
        # All top-level category titles and URLs
        first_title = response.xpath("//h3[@class='tit02']/a/text()").extract()
        first_urls = response.xpath("//h3[@class='tit02']//@href").extract()

        # All subcategory titles and URLs
        second_title = response.xpath("//ul[@class='list01']/li/a/text()").extract()
        second_urls = response.xpath("//ul[@class='list01']/li/a/@href").extract()

        # Walk the top-level categories and create a folder for each
        for i in range(len(first_title)):
            item = SinaItem()

            first_filename = "/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA" + '/' + first_title[i]
            if not os.path.exists(first_filename):
                os.makedirs(first_filename)

            item['first_title'] = first_title[i]
            item['first_urls'] = first_urls[i]

            # Walk the subcategories that belong to this category
            for j in range(len(second_urls)):
                if second_urls[j].startswith(first_urls[i]):
                    second_filename = first_filename + '/' + second_title[j]
                    if not os.path.exists(second_filename):
                        os.makedirs(second_filename)

                    item['second_title'] = second_title[j]
                    item['second_urls'] = second_urls[j]
                    item['second_filename'] = second_filename

                    # Debug log
                    b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/111.txt"
                    with open(b_filename, 'a+') as b:
                        b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')

                    # Request each subcategory, passing a deep copy of the item via meta
                    yield scrapy.Request(url=item['second_urls'],
                                         meta={'meta_1': copy.deepcopy(item)},
                                         callback=self.second_parse)

    def second_parse(self, response):
        item = response.meta['meta_1']
        third_urls = response.xpath('//a/@href').extract()

        for i in range(len(third_urls)):
            # Keep links that start with the category URL and end in "shtml"
            if_belong = third_urls[i].startswith(item['first_urls']) and third_urls[i].endswith('shtml')
            if if_belong:
                item['third_urls'] = third_urls[i]
                yield scrapy.Request(url=item['third_urls'],
                                     meta={'meta_2': copy.deepcopy(item)},
                                     callback=self.detail_parse)

                # Debug log
                b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/222.txt"
                with open(b_filename, 'a+') as b:
                    b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')

    def detail_parse(self, response):
        item = response.meta['meta_2']

        # Article title: first match of either expression, in document order
        head = response.xpath("//li[@class='item']//a/text() | //title/text()").extract()[0]

        # Article body: join the paragraphs and strip full-width spaces (U+3000)
        content_list = response.xpath('//div[@id="artibody"]/p/text()').extract()
        content = ''.join(content_list).replace('\u3000', '')

        item['head'] = head
        item['content'] = content

        yield item

        # Debug log
        b_filename = r"/Users/jvf/Downloads/数据分析/练习/0715-新浪网导航/DATA/333.txt"
        with open(b_filename, 'a+') as b:
            b.write(item['second_filename'] + '\t' + item['second_title'] + '\t' + item['second_urls'] + '\n')
```
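The comment at the top of the listing warns about deep-copying `meta`. The reason can be shown without Scrapy: parse() reuses one item object across loop iterations, while yielded requests are only processed later, so a shared object may already hold a later iteration's values when the callback reads it. A minimal simulation with plain dicts standing in for the item and for the queued requests (the `queue_requests` function and the `pending` list are hypothetical stand-ins for the spider loop and Scrapy's scheduler):

```python
import copy

subcategories = [('国内', 'http://news.sina.com.cn/china/'),
                 ('国际', 'http://news.sina.com.cn/world/')]


def queue_requests(use_deepcopy):
    """Simulate parse(): one item mutated in a loop, one 'request' per iteration."""
    pending = []  # stands in for requests waiting in the scheduler
    item = {'first_title': '新闻'}
    for title, url in subcategories:
        item['second_title'] = title
        item['second_urls'] = url
        meta_item = copy.deepcopy(item) if use_deepcopy else item
        pending.append({'meta_1': meta_item})
    # The "callbacks" only read the meta after the loop has finished
    return [req['meta_1']['second_title'] for req in pending]


print(queue_requests(use_deepcopy=False))  # both entries show the last title
print(queue_requests(use_deepcopy=True))   # each entry keeps its own title
```

With only string values a shallow `copy.copy` would also suffice here; `deepcopy` stays safe if the item ever holds nested lists or dicts.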

Original post: https://www.cnblogs.com/jvfjvf/p/10873900.html
